We will put some data into our logical volume to make sure there is no data loss after we recover the LVM2 partition, restore the PV and restore the VG using LVM metadata in the next steps.

~]# mkdir
~]# mount /dev/mapper/test_vg-test_lv1 /test/

Create a dummy file and note down the md5sum value of this file

~]# touch
~]# md5sum /test/file
d41d8cd98f00b204e9800998ecf8427e /test/file

Next un-mount the logical volume

~]# umount /test/

How to manually delete LVM metadata in Linux?

To manually delete LVM metadata in Linux you can use various tools such as wipefs, dd etc. wipefs can erase filesystem, raid or partition-table signatures (magic strings) from the specified device to make the signatures invisible for libblkid. wipefs does not erase the filesystem itself nor any other data from the device.

To restore the LVM metadata stored in the file system signature from the backup we can use

dd if=~/wipefs-sdb-0x00000218.bak of=/dev/sdb seek=$((0x00000218)) bs=1 conv=notrunc

Next you can verify that all the logical volumes, volume groups and physical volumes that were part of /dev/sdb are missing from the Linux server

~]# lvs -o+devices
swap rhel -wi-ao- 956.00m /dev/sda2(0) <- our logical volume is no longer visible

~]# vgs
rhel 1 2 0 wz-n- <14.50g 0 <- test_vg is no longer visible

~]# pvs
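To see what the `seek=... bs=1 conv=notrunc` part of the dd restore does, here is a minimal sketch that runs against a scratch file instead of a real disk. The file names `disk.img` and `signature.bak` and the marker string `LVM2 001` are stand-ins chosen for illustration; only the offset 0x218 comes from the command above.

```shell
#!/bin/sh
# Scratch demo on a regular file; no real disk is touched.
set -eu
workdir=$(mktemp -d)
img="$workdir/disk.img"        # hypothetical stand-in for /dev/sdb
bak="$workdir/signature.bak"   # hypothetical stand-in for the wipefs backup file
offset=$((0x218))              # offset taken from the dd command in the article

# Build a 1 MiB image and place an 8-byte marker at the offset.
dd if=/dev/zero of="$img" bs=1024 count=1024 2>/dev/null
printf 'LVM2 001' | dd of="$img" bs=1 seek="$offset" conv=notrunc 2>/dev/null
sum_before=$(md5sum < "$img")

# Save the 8 bytes, then zero them to mimic an erased signature.
dd if="$img" of="$bak" bs=1 skip="$offset" count=8 2>/dev/null
dd if=/dev/zero of="$img" bs=1 seek="$offset" count=8 conv=notrunc 2>/dev/null
erased=$(dd if="$img" bs=1 skip="$offset" count=8 2>/dev/null | od -An -tx1 | tr -d ' \n')
sum_erased=$(md5sum < "$img")

# Restore with the same dd form as above: bs=1 makes seek count bytes,
# conv=notrunc keeps everything after the written bytes in place.
dd if="$bak" of="$img" bs=1 seek="$offset" conv=notrunc 2>/dev/null
restored=$(dd if="$img" bs=1 skip="$offset" count=8 2>/dev/null)
sum_after=$(md5sum < "$img")
size=$(wc -c < "$img" | tr -d ' ')

echo "erased=$erased restored=$restored size=$size"
rm -rf "$workdir"
```

The checksum of the image after the restore matches the one taken before the erase, which is the same idea as comparing the md5sum of /test/file before and after the recovery.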

Here as you see test_vg is mapped to /dev/sdb

~]# vgs -o+devices

Similarly you can see the new logical volume test_lv1 is mapped to /dev/sdb device

~]# lvs -o+devices
LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert Devices
test_lv1 test_vg -wi-a- 1.00g /dev/sdb(0) <- new Logical Volume
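If you would rather check the device mapping in a script than read the Devices column by eye, something along these lines could work. It is only a sketch: `lvs_on_device` is a hypothetical helper name, and the sample rows are the two logical volumes from this article reduced to three columns (LV, VG, Devices).

```shell
#!/bin/sh
# Print "vg/lv" for every row whose Devices column sits on the given disk.
# Expects rows shaped like: lvs --noheadings -o lv_name,vg_name,devices
lvs_on_device() {
    awk -v dev="$1" '$3 ~ "^" dev "[0-9]*\\(" { print $2 "/" $1 }'
}

# Rows taken from the listings in this article, reduced to three columns.
sample='test_lv1 test_vg /dev/sdb(0)
swap rhel /dev/sda2(0)'

on_sdb=$(printf '%s\n' "$sample" | lvs_on_device /dev/sdb)
on_sda=$(printf '%s\n' "$sample" | lvs_on_device /dev/sda)
echo "sdb: $on_sdb"
echo "sda: $on_sda"
```

On a live system the sample text would be replaced by `lvs --noheadings -o lv_name,vg_name,devices | lvs_on_device /dev/sdb`; an empty result then means no logical volume is mapped to that disk.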

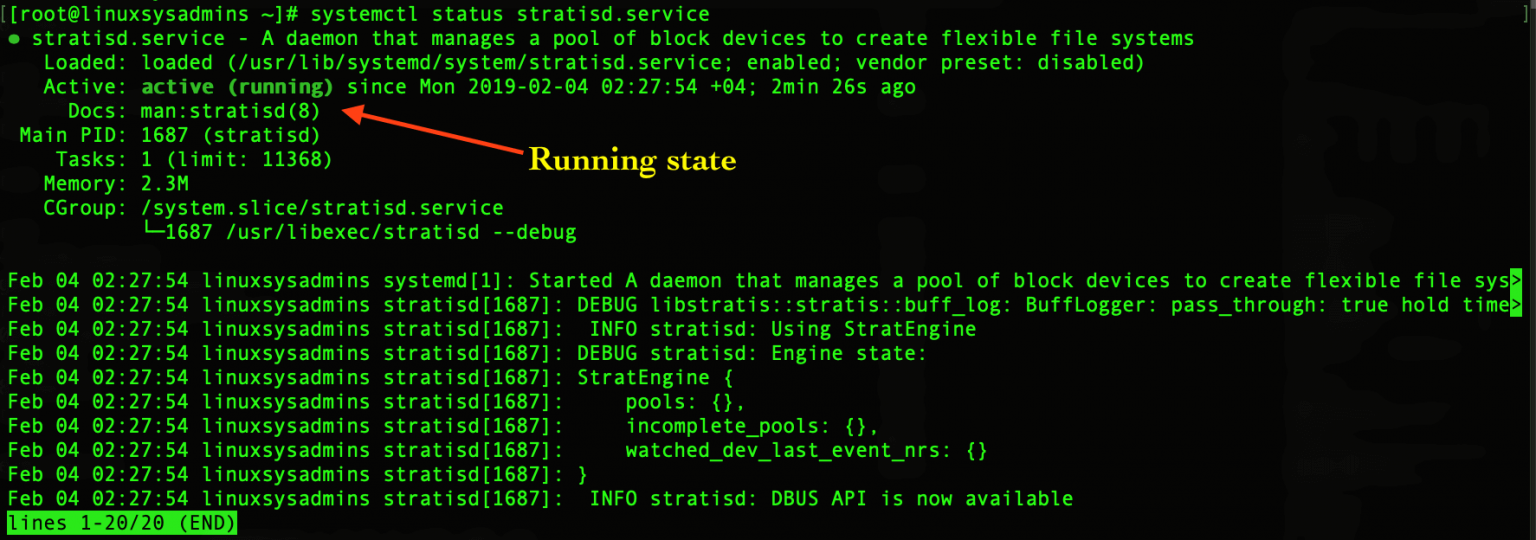

Due to this, all the logical volumes, volume groups and physical volumes mapped to that LVM metadata were not visible on the Linux server. So we had to restore the LVM metadata from the backup using vgcfgrestore. I will share the steps to reproduce the scenario, i.e. manually delete the LVM metadata, and then the steps to recover the LVM2 partition, restore PV, restore VG and restore LVM metadata in Linux using vgcfgrestore.

Next create a new Volume Group, we will name this VG as test_vg.

Volume group "test_vg" successfully created

List the available volume groups using vgs. I currently have two volume groups wherein rhel volume group contains my system LVM2 partitions

~]# vgs
test_vg 1 0 0 wz-n- <8.00g <8.00g <- new VG

Create a new logical volume test_lv1 under our new volume group test_vg

~]# lvcreate -L 1G -n test_lv1 test_vg

Create ext4 file system on this new logical volume

~]# mkfs.ext4 /dev/mapper/test_vg-test_lv1
Creating filesystem with 262144 4k blocks and 65536 inodes
Filesystem UUID: c2d6eff5-f32f-40d4-88a5-a4ffd82ff45a
Writing superblocks and filesystem accounting information: done

List the available volume groups along with the mapped storage device.
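As a quick sanity check on the mkfs.ext4 output, the block and inode counts can be recomputed from the 1G size given to lvcreate. The variable names below are only illustrative.

```shell
#!/bin/sh
lv_bytes=$((1024 * 1024 * 1024))   # lvcreate -L 1G
block_bytes=4096                   # "4k blocks" in the mkfs.ext4 output
inodes=65536                       # inode count from the mkfs.ext4 output

blocks=$((lv_bytes / block_bytes))
bytes_per_inode=$((lv_bytes / inodes))
echo "blocks=$blocks bytes_per_inode=$bytes_per_inode"
```

This prints blocks=262144, the same block count mkfs.ext4 reported, and shows that the filesystem got one inode per 16384 bytes.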

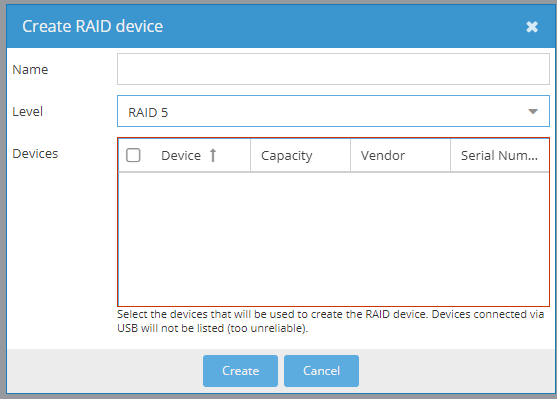

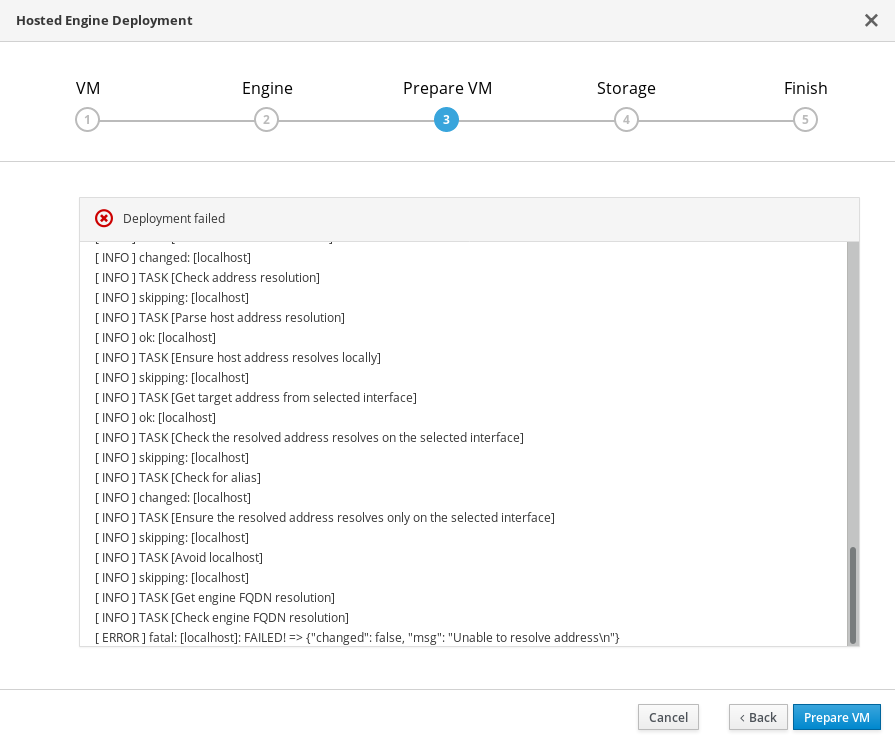

* Create File System on the Logical Volume
* How to manually delete LVM metadata in Linux?
* Step 1: List backup file to restore LVM metadata in Linux
* Step 2: Restore PV (Physical Volume) in Linux
* Step 3: Restore VG to recover LVM2 partition
* Step 5: Verify the data loss after LVM2 partition recovery
* How to recover LVM2 partition (Restore deleted LVM)
* How to restore PV (Physical Volume) in Linux
* How to restore VG (Volume Group) in Linux

Earlier we had a situation wherein the LVM metadata from one of our CentOS 8 nodes was missing.

I have tried to wipe out an HDD which I was previously using as a Ceph OSD drive and get this message:

Disk/partition '/dev/sdb' has a holder (500)

I could do wipefs from CLI but it works only if I add -a and -f flags.
Back to web gui, I tried again and get the same error.

Disk /dev/sdb: 200 GiB, 214748364800 bytes, 419430400 sectors
Disk model: VBOX HARDDISK => As you can see, I was testing in VirtualBox.
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Put some disks from an old vmware installation in there, want to create a ZFS pool on them. ZFS does not see any free disks, because all are marked as ddf_raid_member. I can click "wipe disk", some are-you-sure-warning appears, I click yes, some progress bar appears. Whatever you decided, you decided wrong - because that's a bug. Of course you may see that different, because Virtual Environment 7.0-8 is flawless and bug-free already.

* wipefs -fa /dev/sda -> no errors, Proxmox GUI after reload still shows disk as ddf_raid_member
* dd if=/dev/zero of=/dev/sda bs=1M count=1000 -> no errors (obviously), Proxmox GUI after reload still shows disk as ddf_raid_member

Now all of the 8 disks are 10TB and I do not intend to do a dd of the whole disk, because that's 14 hours for each disk, so I'd really appreciate if someone has an idea how to achieve a wipe.
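One possible explanation for the ddf_raid_member case, which is an assumption here and not something the post confirms, is that the RAID metadata sits near the end of the disk, so zeroing only the first 1000 MB never reaches it. A sketch of clearing just the first and last MiB, shown on a scratch file so nothing real gets wiped (`disk.img` and `wipe_mib` are hypothetical names):

```shell
#!/bin/sh
# Scratch demo on a regular file; no real disk is touched.
set -eu
workdir=$(mktemp -d)
img="$workdir/disk.img"      # hypothetical scratch file standing in for a disk
mib=$((1024 * 1024))

# 8 MiB of non-zero bytes so the zeroed regions are easy to spot.
dd if=/dev/zero bs="$mib" count=8 2>/dev/null | tr '\0' 'x' > "$img"

size=$(wc -c < "$img" | tr -d ' ')   # on a real disk: blockdev --getsize64 /dev/sdX
total_mib=$((size / mib))            # rounds down; a partial last MiB is not covered
wipe_mib=1                           # how much to clear at each end (assumption)

# Zero the first and the last wipe_mib MiB; conv=notrunc keeps the file size.
dd if=/dev/zero of="$img" bs="$mib" count="$wipe_mib" conv=notrunc 2>/dev/null
dd if=/dev/zero of="$img" bs="$mib" count="$wipe_mib" \
   seek=$((total_mib - wipe_mib)) conv=notrunc 2>/dev/null

# Count the bytes that are still non-zero: the untouched middle of the image.
remaining=$(tr -d '\0' < "$img" | wc -c | tr -d ' ')
echo "size=$size remaining=$remaining"
rm -rf "$workdir"
```

On a 10TB disk this touches only a few MiB instead of taking 14 hours. Pointing it at a real device is destructive, so double-check the device name first.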
